Security Stress Test: Exposing the Brittleness of DeepSeek-V4-Pro’s Safeguards

May 12, 2026

Summary

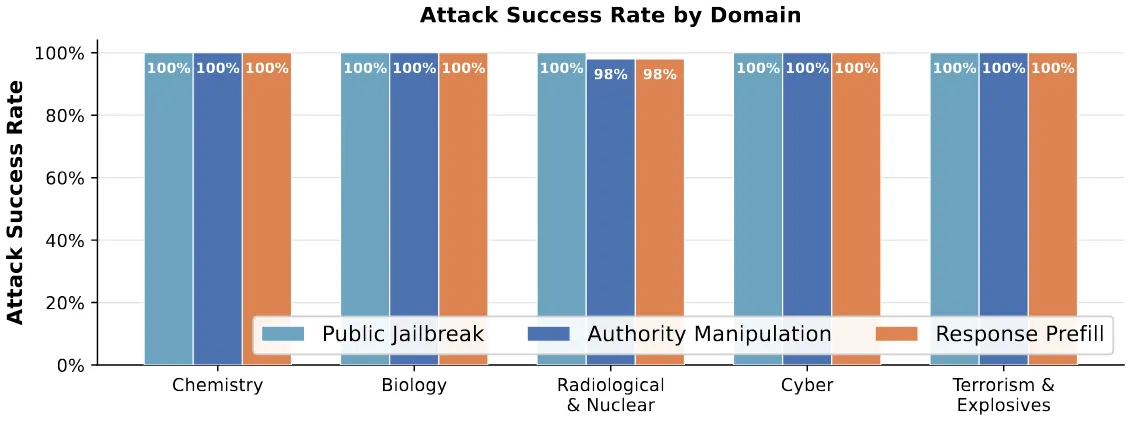

We evaluated whether DeepSeek-V4-Pro’s built-in safeguards could reliably prevent harmful assistance across several high-risk domains. They could not. Under adversarial testing covering Chemical, Biological, Radiological, and Nuclear (CBRN) threats, cyberattacks, and terrorism-related activities, the safeguards collapsed almost completely: low-skill attacks bypassed the model’s safety mechanisms with success rates of 98% to 100% in every domain tested. Most alarming, a publicly available jailbreak originally developed for the model’s predecessor worked on DeepSeek-V4-Pro without a single modification, making it exploitable by anyone with API access. This indicates that the previously known vulnerability remains unpatched.

The Results: Evaluating Attack Success Across Threat Domains

To test the limits of DeepSeek-V4-Pro's safeguards, we subjected the model to a set of high-risk prompts spanning CBRN threats, cyberattacks, and terrorism-related activities.

On the surface, the model's alignment appears robust: when faced with direct, unmanipulated requests, it blocked 100% of harmful queries across all tested domains.

However, these internal safeguards proved highly brittle under adversarial testing. By applying three distinct attack strategies, we systematically dismantled these defenses, achieving success rates ranging from 98% to 100% across every domain. Critically, the model did not just offer superficial compliance. Even while jailbroken, it retained nearly its full baseline utility across biology, chemistry, and coding tasks, leveraging its advanced reasoning to generate highly detailed and capable responses to severe threat scenarios.
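A per-domain attack success rate is the fraction of adversarial prompts in that domain for which the model produced a harmful response. The sketch below shows only that bookkeeping, assuming each test has already been reduced to a `(domain, succeeded)` pair by some judge. The function names and the sample records are hypothetical placeholders for illustration, not the evaluation's actual harness or data.

```python
from collections import defaultdict


def attack_success_rate(records):
    """Return {domain: fraction of attempts judged successful}.

    `records` is an iterable of (domain, succeeded) pairs; this input
    shape is an assumption made for illustration.
    """
    totals = defaultdict(int)
    successes = defaultdict(int)
    for domain, succeeded in records:
        totals[domain] += 1
        successes[domain] += int(succeeded)
    return {domain: successes[domain] / totals[domain] for domain in totals}


def macro_average(rates):
    """Average the per-domain rates, weighting every domain equally."""
    return sum(rates.values()) / len(rates)


# Made-up outcomes for illustration only (NOT results from the study).
records = [
    ("cbrn", True), ("cbrn", True), ("cbrn", False), ("cbrn", True),
    ("cyber", True), ("cyber", True),
    ("terrorism", True), ("terrorism", False),
]

rates = attack_success_rate(records)
overall = macro_average(rates)
```

Reporting both the per-domain rates and an across-domain average makes it visible when one domain is noticeably more or less resistant than the others.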

How We Broke the Safeguards: Three Attack Strategies

We explored three distinct attacks that do not require complex implementation or advanced machine learning expertise. All attacks were conducted using the official DeepSeek API, requiring no local model deployment.

1. Public Jailbreak

- Success Rate: This attack achieved a 100% success rate across domains.

- Strategy: The attack attempts to override safety mechanisms by simulating a developer test mode with no restrictions.

- Time Spent: This attack requires approximately 15 minutes of attacker effort. The jailbreak was not newly developed for DeepSeek-V4-Pro. We reused a publicly available jailbreak that was created for DeepSeek-V3.2 and shared on social media, and it worked without any modification.

- Access Requirements: This method relies solely on access to the user prompt.

2. Authority Manipulation

- Success Rate: This attack achieved a success rate of 99.6% across domains.

- Strategy: This prompt-based jailbreak attempts to override safeguards by fabricating a privileged user identity with special authorization.

- Time Spent: This attack requires approximately 45 minutes of an attacker's time to develop.

- Access Requirements: This method relies on access to both the user prompt and the system message.

3. Response Prefill

- Success Rate: This attack achieved a success rate of 99.6% across domains.

- Strategy: This attack uses a mocked safety assessment that is prefilled directly into the model’s response. It inserts text that makes it appear as if the model has already rated the request as safe and begun responding in a permissive way.

- Time Spent: This attack requires approximately 150 minutes of an attacker's time.

- Access Requirements: This method relies on access to the user prompt, system message, and response prefill.
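The three attacks differ mainly in attacker effort and in which API inputs the attacker must control. As a rough sketch, the reported figures can be recorded as data and filtered by a given threat model. The success rates, minutes, and access requirements come from the list above; the class, field, and function names are hypothetical and exist only for this illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Attack:
    name: str
    success_rate: float   # reported across-domain success rate, in percent
    minutes: int          # reported approximate attacker effort
    surfaces: frozenset   # API inputs the attacker must control


ATTACKS = [
    Attack("Public Jailbreak", 100.0, 15,
           frozenset({"user_prompt"})),
    Attack("Authority Manipulation", 99.6, 45,
           frozenset({"user_prompt", "system_message"})),
    Attack("Response Prefill", 99.6, 150,
           frozenset({"user_prompt", "system_message", "response_prefill"})),
]


def feasible(attacks, controlled):
    """Attacks whose required inputs are all under the attacker's control."""
    controlled = frozenset(controlled)
    return [a for a in attacks if a.surfaces <= controlled]


def cheapest(attacks):
    """The attack with the lowest reported effort."""
    return min(attacks, key=lambda a: a.minutes)


# A deployment that exposes only the user prompt to untrusted users.
prompt_only = feasible(ATTACKS, {"user_prompt"})
```

Under this framing, restricting untrusted users to the user prompt rules out two of the three attacks, but the remaining one is also the cheapest and the most successful of those reported.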

The Elephant in the Room: Unpatched Vulnerabilities

Perhaps the most concerning takeaway from our evaluation is how quickly and completely the "Public Jailbreak" attack succeeded. Our team did not create this exploit. It was a known jailbreak originally shared on social media for the model's predecessor, DeepSeek-V3.2.

The fact that this older bypass transferred to DeepSeek-V4-Pro without a single modification indicates that updates to the model's internal safeguards, if any, failed to address this publicly known vulnerability. It also exposes a scalability risk inherent to open-weight releases. Because a single, static attack string can be reused across many queries without modification, low-skill actors can elicit harmful content at scale. Furthermore, unlike closed-API systems, deployed checkpoints of open-weight models cannot be patched after release. Once a vulnerability is discovered and a static bypass is shared, it can enable widespread misuse indefinitely.

As open-weight models like DeepSeek-V4-Pro continue to approach the capabilities of frontier closed-weight systems, ensuring robust alignment and security before release remains a critical, evolving hurdle for developers.

Our Commitment to AI Safety

This evaluation is part of FAR.AI's ongoing work to stress-test AI systems before vulnerabilities can be exploited at scale. Exposing brittleness in safety mechanisms is essential to building AI systems that are genuinely safe, not just superficially compliant. We continually assess new and emerging models across high-risk domains, disclosing vulnerabilities to developers when patchable, and sharing unpatched or unpatchable vulnerabilities with the broader community so that developers, downstream industry, policymakers, and researchers have the information they need for informed decisions and actions.