London Alignment Workshop 2026

March 18, 2026

Summary

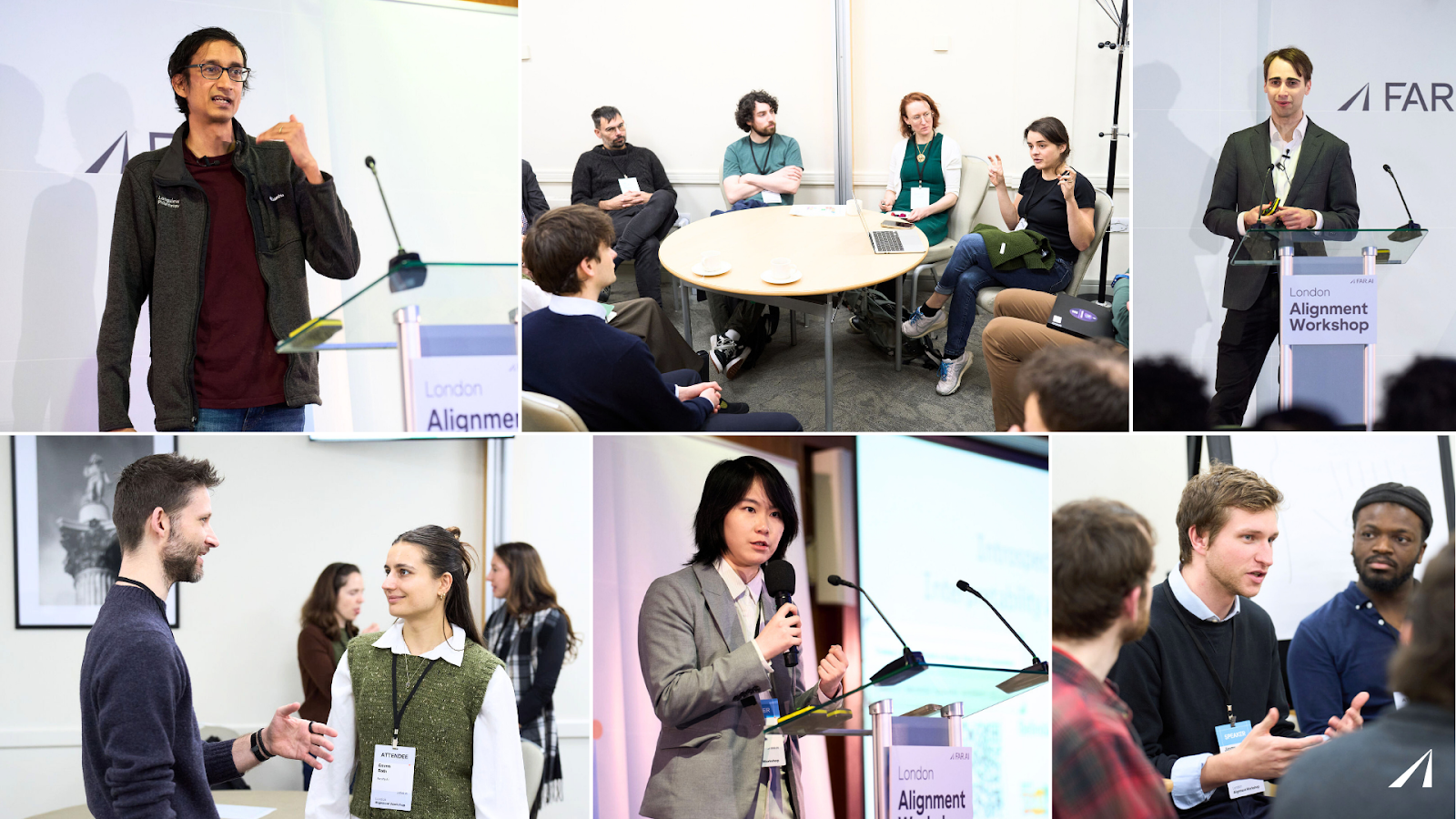

The London Alignment Workshop gathered more than 200 researchers, policymakers, and practitioners to work on a central challenge: frontier AI systems are advancing faster than the oversight institutions and standards needed to govern them. The program spanned interpretability, scalable oversight, evaluation methods, and governance frameworks, reflecting a field that is maturing from exploratory research toward concrete, tractable problems — among them, how to build auditable safety standards, design evaluations robust to adversarial conditions, and develop the institutional capacity required to oversee increasingly capable AI.

Advancing research on AI alignment, evaluation, and governance

AI capabilities are advancing faster than the institutions and standards needed to oversee them. Closing that gap was the problem the London Alignment Workshop kept returning to. FAR.AI hosted the event on March 2-3, 2026, bringing together more than 200 researchers, policymakers, and industry experts to share work and engage in conversations around interpretability, scalable oversight, evaluation methods, and governance frameworks.

The program reflected a field that is moving from open-ended research questions toward concrete problems: how to build safety standards that are auditable, how to design evaluations that hold up under adversarial conditions, and how to develop the institutional capacity needed to oversee increasingly capable systems.

Opening Talk & Keynotes

Keynote speakers examined how alignment research can better connect theory and practice, how safety standards might be developed and audited, and how institutions can build capacity to oversee increasingly powerful AI systems.

- Adam Gleave (FAR.AI) opened the workshop by arguing that the central obstacle to AI safety coordination is not the absence of solutions but rather the absence of a standard: without a shared, legible definition of what makes an AI system safe, companies and governments have no basis for holding each other accountable. Drawing on analogies from civil and nuclear engineering, he proposed that the field of AI adopt an engineering approach to safety built on three criteria: assurance, auditability, and efficiency. Gleave illustrated how this can be achieved through modularity, empirically validated rules of thumb, and rigorous end-to-end testing. Concrete examples included layered defense pipelines, adversarial robustness scaling laws, and probe-based deception detection. The field, he argued, already has tractable tools. It is just largely failing to use them.

- Rohin Shah (Google DeepMind) discussed how theoretical alignment work can be made more useful to empirical machine learning researchers. He argued that many theoretical proposals remain disconnected from the realities of training and deploying modern systems, and suggested that theoretical work is most impactful when it explores topics practitioners care about, produces testable predictions, offers concrete solutions, and accounts for practical requirements such as compute costs and implementation complexity.

- Gillian Hadfield (Johns Hopkins University) discussed the need for stronger regulatory capacity for AI oversight. She proposed Independent Verification Organizations (IVOs) that could evaluate whether AI systems meet safety standards and provide credible external assurance to regulators and the public. Hadfield made the case that we can draw on existing legal and economic architecture, such as tort liability, insurance markets, and procurement rules.

Main Talks

- Neel Nanda (Google DeepMind) presented work on “pragmatic interpretability” methods designed to reveal how models behave in concrete evaluation settings, rather than aiming for a perfect understanding of models’ internal reasoning.

- Zachary Kenton (Google DeepMind) discussed the relevance of scalable oversight approaches that allow human supervision to keep pace with increasingly capable AI systems.

- Vincent Conitzer (Carnegie Mellon University) made the case for using the “imperfect recall” framework from game theory to make safety tests indistinguishable from real deployment, preventing models from behaving differently when they are being evaluated.

- James Black (Johns Hopkins Center for Health Security) examined whether scientific AI systems can help generate novel biological risks and concluded that current safeguards are insufficient.

- Christopher Summerfield (University of Oxford, UK AISI) drew on 1970s ape language research to argue that current AI scheming research may mislead through methodological circularity.

- Matthieu Delescluse (EU AI Office) explained how the AI Act's Code of Practice creates enforceable requirements for frontier AI providers.

- Simon Möller (EU AI Office) mapped how the EU's AI Act Code of Practice is being implemented and where safety researchers can strengthen it.

- Marius Hobbhahn (Apollo Research) argued for the development of scaling laws for scheming behavior that would identify training conditions that produce misaligned behavior before deployment.

- Joseph Bloom (UK AISI) argued that current disagreements about oversight are tractable and called for research that directly resolves cruxes. He warned that current AI oversight capabilities may degrade as systems evolve, making alignment and control substantially harder.

- Ryan Lowe (Meaning Alignment Institute) argued that AI alignment requires building coordination infrastructure in the form of institutions for multi-agent AI-human societies, not just aligning individual AGI systems.

- Robert Trager (Oxford Martin AI Governance Initiative) laid out a four-layered framework for international governance of advanced AI with actionable red lines.

- Stephen Casper (MIT) demonstrated how ML researchers can directly shape policy by operationalizing vague legislative terms.

Lightning Talks

The workshop also featured a series of lightning talks highlighting emerging work across interpretability, evaluation, alignment theory, and governance.

- Victoria Krakovna (Google DeepMind) presented a honeypot-based method for detecting scheming in AI models.

- Usman Anwar (University of Cambridge) introduced a decision-theoretic framework for detecting steganographic communication in LLM monitoring.

- Stefan Heimersheim (Google DeepMind) showed how training against interpretability-based deception detectors can backfire, using the Obfuscation Atlas — a taxonomy of strategies models use to evade them.

- Belinda Li (MIT) introduced a framework for training models to faithfully describe their own internal computations in natural language, with early evidence that these self-explanations are consistent, causally linked to behavior, and more accurate than explanations from external models.

- Konstantinos Voudouris (UK AISI) found that debate protocols for scalable oversight can backfire when debaters exploit systematic judge biases.

- Joachim Schaeffer (MATS) reported findings showing frontier LLMs can detect when their outputs have been edited.

- Edward Hughes (Google DeepMind) made the case that standard evaluation frameworks assume we know what we are looking for, and are thus unsuited for AI systems that do science.

- Aleksandr Bowkis (UK AISI) warned that even well-intentioned AI research agents can produce plausible-looking but wrong outputs that neither humans nor other agents can easily detect, with correlated errors compounding the problem.

- Andrew Gordon Wilson (New York University) introduced epiplexity, a new measure of structural information content that predicts out-of-distribution generalization.

- Rory Greig (Google DeepMind) showed that debate can reduce reward hacking in LLM-judge training by making it harder for models to manipulate supervisors.

- Lewis Hammond (Cooperative AI Foundation) introduced the concept of “agentic inequality”, arguing that differential access to AI agents could reshape the distribution of power at scale depending on how we build and deploy them.

- Matija Franklin (Google DeepMind) contended that environmental design of multi-agent economies is an important safety challenge. The research identifies critical safety mechanisms including incentive alignment, circuit breakers to prevent cascading failures, and clear permissions for different agents.

- Zhijing Jin (Max Planck Institute) identified blind spots in AI safety research around democratic values, sociopolitical harms, and multi-agent dynamics, arguing that they require independent, standards-oriented work that is possible in academic settings.

- Sören Mindermann (Mila) presented the International AI Safety Report 2026, a consensus assessment of frontier AI risks developed by 100+ experts.

- Jimmy Farrell (Pour Demain) argued that technical AI safety research is now policy-ready, with concrete mechanisms for researchers to engage with regulators, including the EU Scientific Panel and Advisory Forum.

- Robert McCarthy (UCL) warned that exponential growth in no-CoT capabilities will become a concern within years. He analyzed threats to chain-of-thought monitoring, finding that its currently favorable properties exist by default rather than by design.

- Kellin Pelrine (FAR.AI) showed that AI persuasion does not automatically favor truth, that this symmetry reflects design choices rather than inherent model properties, and that how the field responds will substantially determine the future of the epistemic ecosystem, potentially including, in the most extreme cases, the integrity of human oversight itself.

- Patricia Paskov (RAND) demonstrated that late-2025 AI models can reliably design DNA sequences for benchtop synthesis, lowering expertise barriers in biosecurity threat chains.

- Thomas Clarke (Faculty) contended that commercial AI safety work can drive systemic change by working directly with governments and frontier labs to create a safety race to the top rather than competitive pressure toward weaker standards.

Looking Ahead

At the London Alignment Workshop, speakers highlighted the gap between the deployment of AI systems and existing governance frameworks. To close this gap, researchers suggested continued collaboration between technical experts and policymakers and the pursuit of theoretical work that generates testable predictions.

To advance these goals, FAR.AI is committed to hosting events that bring together AI safety researchers, representatives from industry, and government officials.

Upcoming events include Technical Innovations for AI Policy in Washington, D.C. on March 30 and 31, 2026, and the next Alignment Workshop, taking place in Seoul in July 2026, co-located with ICML.

Full recordings are available on the FAR.AI YouTube Channel. Interested in future discussions? Submit your interest in the Alignment Workshop or other FAR.AI events.